The Bill You Didn't Know You Were Paying

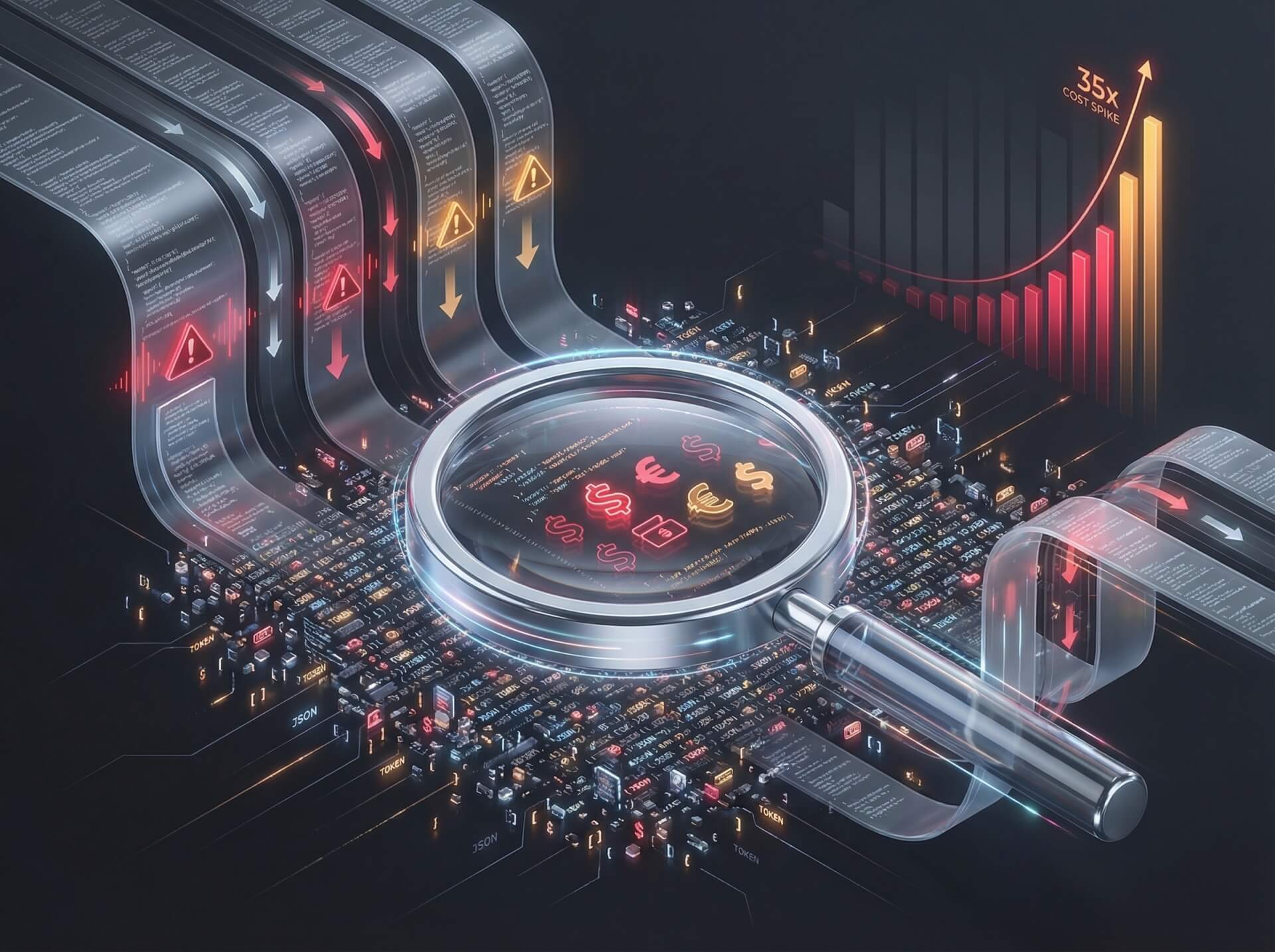

Model Context Protocol (MCP) has become the default way AI agents discover and invoke external tools. The developer experience is seamless: connect a server, and the agent instantly knows what tools are available, what parameters they accept, and how to call them. But that convenience has a price — one that shows up not in your server logs, but in your token count.

Each MCP tool definition costs between 550 and 1,400 tokens. That might sound manageable in isolation. It is not.

The Numbers That Should Worry You

A typical OpenClaw setup connects to three MCP servers exposing roughly 40 tools in total. At ~1,000 tokens per tool definition, that is about 40,000 tokens, and closer to 55,000 when the definitions sit near the top of the range, consumed before the agent reads a single word of the user's message. In one documented case, three MCP servers injected 143,000 tokens into a 200,000-token context window, devouring 72% of available capacity with nothing but schema definitions.
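The arithmetic behind these figures is easy to reproduce. The sketch below is only an illustration: the function names are made up for this example, and the per-tool costs are the 550 to 1,400 token range quoted earlier rather than measurements of any particular server.

```python
def schema_overhead(tool_count: int, tokens_per_tool: int) -> int:
    """Tokens spent on tool definitions before any user input is read."""
    return tool_count * tokens_per_tool


def context_share(schema_tokens: int, context_window: int) -> float:
    """Fraction of the context window occupied by tool schemas."""
    return schema_tokens / context_window


# 40 tools across the quoted per-tool range.
low = schema_overhead(40, 550)          # 22,000 tokens
typical = schema_overhead(40, 1_000)    # 40,000 tokens
high = schema_overhead(40, 1_400)       # 56,000 tokens

# The documented case: 143,000 schema tokens in a 200,000-token window.
share = context_share(143_000, 200_000)

print(f"{low:,} / {typical:,} / {high:,} tokens; documented case: {share:.1%}")
```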

A benchmark study by Scalekit using Claude Sonnet 4 put the disparity in sharp relief. A simple task — checking a repository's primary programming language — consumed:

| Method | Tokens Used |
|---|---|
| CLI | 1,365 |
| MCP | 44,026 |

The 43 tool definitions loaded by MCP were the culprit. The agent used one or two of them. It paid for all forty-three.

Root Cause: The Schema Tax

MCP's design philosophy prioritizes flexibility and discoverability. Every connected server dumps its complete tool schema into the conversation context upfront — parameter names, types, descriptions, enum values, nested objects — regardless of whether the agent will ever call those tools. This creates three compounding costs:

- Per-conversation overhead. The definitions inject into every conversation, every time. A user asking a simple question pays the same schema tax as a user running a complex multi-tool workflow.

- Context pressure. Every token spent on schemas is a token unavailable for conversation history, retrieved documents, and — critically — the agent's own reasoning. Compressed context means degraded output quality.

- Direct dollar cost. Tokens are money. The roughly 32x multiplier in the Scalekit benchmark (44,026 tokens versus 1,365) applies to a single operation, and it translates directly into API bills that scale far faster than expected.
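To put the dollar cost in concrete terms, the sketch below takes the two benchmark token counts and applies a price and a workload. Both the price per million tokens and the call volume are assumptions chosen for illustration, not quotes from any provider; substitute your own numbers.

```python
CLI_TOKENS = 1_365     # from the benchmark above
MCP_TOKENS = 44_026    # from the benchmark above

# Hypothetical input price, for illustration only.
ASSUMED_USD_PER_MILLION_TOKENS = 3.00


def cost_usd(tokens_per_call: int, calls: int,
             usd_per_million: float = ASSUMED_USD_PER_MILLION_TOKENS) -> float:
    """Input-token cost of running the same operation `calls` times."""
    return tokens_per_call * calls * usd_per_million / 1_000_000


multiplier = MCP_TOKENS / CLI_TOKENS   # how many times more tokens MCP used
calls_per_day = 10_000                 # assumed workload

print(f"multiplier: {multiplier:.1f}x")
print(f"CLI: ${cost_usd(CLI_TOKENS, calls_per_day):,.2f} per day")
print(f"MCP: ${cost_usd(MCP_TOKENS, calls_per_day):,.2f} per day")
```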

Three Strategies to Reclaim Your Tokens

The solution is not to abandon MCP. It is to stop paying for tools you are not using.

1. Dynamic Tool Loading

Instead of injecting every tool definition at conversation start, load them on demand. The agent begins with a lightweight tool registry — essentially an index of available tool names and one-line descriptions. When the agent decides it needs a specific tool, it fetches the full schema at that moment.

MCP maintainers have confirmed this pattern is gaining traction in the ecosystem. The trade-off: you need to build and maintain a registry layer with search logic, and the agent incurs a small latency cost on first use of each tool. For deployments with more than 15–20 tools, the token savings far outweigh the added complexity.
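A minimal sketch of such a registry layer follows. It is not part of the MCP specification or any SDK: `ToolRegistry`, `fetch_schema`, and the two example tools are hypothetical names, and the substring search stands in for whatever search logic a real deployment would use.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class ToolRegistry:
    """Names and one-line summaries up front; full schemas only on demand."""
    summaries: dict[str, str]
    fetch_schema: Callable[[str], dict]   # hypothetical hook that retrieves one full schema
    _cache: dict[str, dict] = field(default_factory=dict)

    def index_text(self) -> str:
        """The lightweight index placed in context at conversation start."""
        return "\n".join(f"{name}: {desc}" for name, desc in sorted(self.summaries.items()))

    def search(self, query: str) -> list[str]:
        """Naive case-insensitive substring match over names and summaries."""
        q = query.lower()
        return [n for n, d in self.summaries.items() if q in n.lower() or q in d.lower()]

    def load(self, name: str) -> dict:
        """Fetch the full schema on first use, then serve it from the cache."""
        if name not in self.summaries:
            raise KeyError(f"unknown tool: {name}")
        if name not in self._cache:
            self._cache[name] = self.fetch_schema(name)
        return self._cache[name]


# Stand-in for the servers' full schemas (illustrative data only).
FULL_SCHEMAS = {
    "get_repo": {"name": "get_repo", "parameters": {"owner": "string", "repo": "string"}},
    "create_issue": {"name": "create_issue", "parameters": {"title": "string", "body": "string"}},
}
fetch_log: list[str] = []


def fake_fetch(name: str) -> dict:
    fetch_log.append(name)   # record each real fetch
    return FULL_SCHEMAS[name]


registry = ToolRegistry(
    summaries={"get_repo": "Fetch repository metadata", "create_issue": "Open a new issue"},
    fetch_schema=fake_fetch,
)
matches = registry.search("repository")
schema = registry.load(matches[0])
registry.load(matches[0])    # second call is served from the cache
print(matches, fetch_log)
```

Only `index_text()` needs to sit in context from the start; each full schema enters the conversation the first time the agent asks for it, which is where the one-time latency cost comes from.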

2. CLI-First for Known Operations

If the agent already knows which command to run, a CLI call with a concise help string is dramatically cheaper than a full MCP schema. The Scalekit benchmarks consistently showed CLI consuming fewer tokens for single-step, well-defined operations.

This approach works best when:

- The task is a known, repeatable operation (file reads, git commands, API calls with stable parameters).

- The agent does not need to discover what tools exist — it already knows.

It is less suited for exploratory workflows where the agent must browse available capabilities before deciding on an action.
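One way to expose known operations is a small allowlist of fixed commands that the agent refers to by key. The sketch below is an assumption about how that might look, not a description of any particular agent framework; `ALLOWED_COMMANDS` and `run_known_command` are hypothetical names, and only the Python version check is executed here so the example does not depend on a git checkout.

```python
import subprocess
import sys

# Fixed, known operations. The agent only needs these short keys in context,
# not a full parameter schema for each one.
ALLOWED_COMMANDS = {
    "python_version": [sys.executable, "--version"],
    "git_branch": ["git", "rev-parse", "--abbrev-ref", "HEAD"],
}


def run_known_command(key: str, timeout: float = 10.0) -> str:
    """Run an allowlisted command and return its trimmed output."""
    argv = ALLOWED_COMMANDS[key]          # KeyError for anything not allowlisted
    result = subprocess.run(
        argv, capture_output=True, text=True, timeout=timeout, check=True
    )
    return (result.stdout or result.stderr).strip()


print(run_known_command("python_version"))
```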

3. Code Mode: Let the Agent Write the Orchestration

The most token-efficient approach for complex workflows is Code Mode — the agent writes a script that calls MCP tools programmatically rather than invoking them one by one through the protocol layer.

A Sideko benchmark across 12 Stripe integration tasks measured the difference:

| Mode | Token Usage vs. Raw MCP |
|---|---|
| Raw MCP | Baseline |
| CLI | 44% fewer tokens |
| Code Mode | 58% fewer tokens |

On multi-step workflows the efficiency gains were even more pronounced, because the agent amortizes tool invocation overhead across a single script execution rather than paying it on every individual call.
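The sketch below illustrates the shape of a Code Mode script. Everything in it is a stand-in: `call_tool`, `list_customers`, and `list_invoices` are hypothetical placeholders with canned data, not the Stripe API or an MCP client library. The point is only that the loop and the filtering run inside the script, so the model sees a single final result instead of one round trip per call.

```python
from typing import Any, Callable


# Hypothetical stand-ins for tools an MCP server might expose.
def list_customers() -> list[dict]:
    return [{"id": "cus_1", "name": "Acme"}, {"id": "cus_2", "name": "Globex"}]


def list_invoices(customer_id: str) -> list[dict]:
    data = {
        "cus_1": [{"amount": 1200, "status": "overdue"}, {"amount": 300, "status": "paid"}],
        "cus_2": [{"amount": 500, "status": "paid"}],
    }
    return data[customer_id]


TOOLS: dict[str, Callable[..., Any]] = {
    "list_customers": list_customers,
    "list_invoices": list_invoices,
}
call_count = 0


def call_tool(name: str, **args: Any) -> Any:
    """Dispatch to a tool by name; a real setup would go through an MCP client here."""
    global call_count
    call_count += 1
    return TOOLS[name](**args)


def overdue_totals() -> dict[str, int]:
    """The kind of script an agent might write: loop, filter, and aggregate in code."""
    totals: dict[str, int] = {}
    for customer in call_tool("list_customers"):
        invoices = call_tool("list_invoices", customer_id=customer["id"])
        overdue = sum(inv["amount"] for inv in invoices if inv["status"] == "overdue")
        if overdue:
            totals[customer["name"]] = overdue
    return totals


result = overdue_totals()
print(result, f"({call_count} tool calls, one result returned to the model)")
```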

How to Audit Your Setup Right Now

A quick back-of-envelope calculation:

- Count the total number of MCP tools loaded across all connected servers.

- Multiply by ~1,000 tokens per tool.

- Divide by your model's context window size.

If the result exceeds 30% of available context, you are leaving performance and money on the table. Prioritize optimization starting with the highest-token-count servers.
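The same calculation as a short script, in case it is easier to drop into a notebook. The server names and tool counts are placeholders; the ~1,000 tokens per tool and the 30% threshold are the rules of thumb from this section, not measured values.

```python
def audit(tools_per_server: dict[str, int], context_window: int,
          tokens_per_tool: int = 1_000, threshold: float = 0.30) -> dict:
    """Estimate schema overhead and rank servers by their token footprint."""
    per_server = {name: count * tokens_per_tool for name, count in tools_per_server.items()}
    total = sum(per_server.values())
    share = total / context_window
    ranked = sorted(per_server.items(), key=lambda item: item[1], reverse=True)
    return {
        "total_tokens": total,
        "share": share,
        "over_threshold": share > threshold,
        "ranked": ranked,   # heaviest servers first: start optimizing here
    }


# Placeholder inventory: replace with your own servers and tool counts.
report = audit({"server_a": 43, "server_b": 12, "server_c": 8}, context_window=200_000)

print(f"{report['total_tokens']:,} tokens = {report['share']:.1%} of context")
print("over threshold:", report["over_threshold"])
print("optimize first:", report["ranked"][0][0])
```

For a more accurate figure, replace the flat per-tool estimate with token counts measured from your actual tool definitions.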

The Right Tool for the Right Job

The takeaway is not that MCP is broken — it is that MCP is being used as a universal hammer when the toolbox contains better options for specific nails.

- Use MCP when an agent needs to explore and discover capabilities it has not encountered before.

- Use CLI when the agent knows exactly what to run and the operation is a single step.

- Use Code Mode when the workflow involves multiple steps, conditional logic, or loops.
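These three rules can be read as a small decision function. The sketch below is one possible encoding, with hypothetical names and deliberately simple inputs; real routing would likely weigh more signals than these.

```python
from enum import Enum


class Method(Enum):
    MCP = "mcp"
    CLI = "cli"
    CODE_MODE = "code_mode"


def choose_method(needs_discovery: bool, step_count: int,
                  has_control_flow: bool = False) -> Method:
    """Pick an invocation method following the three rules above."""
    if needs_discovery:
        return Method.MCP          # agent must explore unfamiliar capabilities
    if step_count > 1 or has_control_flow:
        return Method.CODE_MODE    # multiple steps, conditionals, or loops
    return Method.CLI              # single known operation


print(choose_method(needs_discovery=False, step_count=1))
print(choose_method(needs_discovery=False, step_count=4))
print(choose_method(needs_discovery=True, step_count=1))
```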

Match the invocation method to the task. Your context window — and your invoice — will thank you.